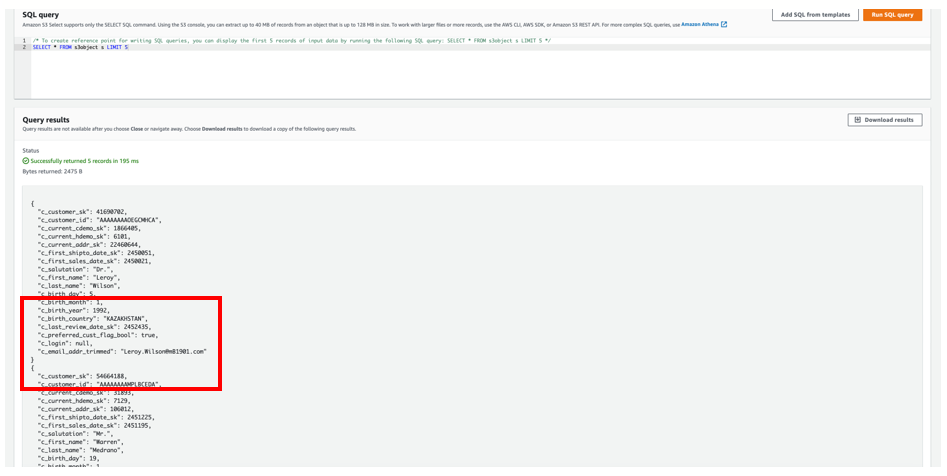

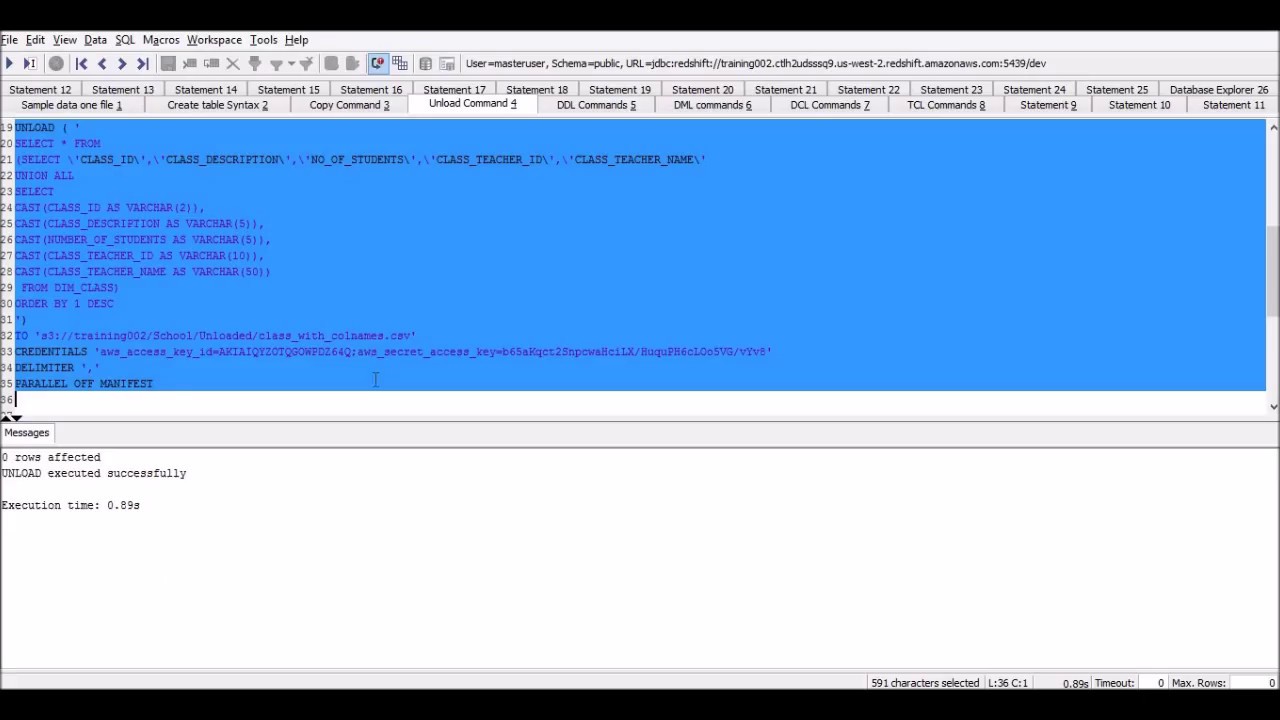

If you've been around the Amazon Redshift block a time or two, you're probably familiar with Redshift's COPY command. Well, allow us to introduce you to its partner in crime: the UNLOAD command. Redshift's UNLOAD command lets you export the result of a SQL query run in the data warehouse into an Amazon S3 bucket, essentially doing the reverse of the COPY command. In this article, we've captured SQL, CLI, and SDK examples so you know which data unloading options are available to you (and what you need to pay extra-close attention to). Welcome to your complete Redshift data unloading guide.

Why would you want to unload data from Redshift?

Before running your first UNLOAD command, consider your goal. Why do you want to unload your data from Redshift? Without a clear goal in mind, you're susceptible to inefficiency traps that might delay your data operations.

Maybe you want your data in other business tools

Do you want to move your data from Redshift into other business apps (like Salesforce or HubSpot)? Within your organization, different business teams have different needs and expertise, so some teams might use Excel while others lean on a CRM. Regardless of the different types of software used across your org, data is at the heart of all your business operations. ❤️ It plays a critical role across all your teams, empowering them to do their best work from a single source of truth.

Because most software that uses your data can import specific file types, you can use an UNLOAD query to export data out of Redshift in CSV or JSON (or other formats as needed). And, if you give your business users access to the specified buckets, you can cut yourself out as the middleman and give them self-serve access to the data they need, when they need it.

Or you want to migrate it into another data storage service

Like many companies, you probably use multiple data storage services, like a relational database, a data warehouse, and a data lake.
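To make the CSV export idea concrete, here is a minimal sketch in Python that only assembles an UNLOAD statement as a string; it does not connect to Redshift. The function name `build_csv_unload`, the bucket path, and the role ARN are hypothetical placeholders, and the query is assumed to contain no single quotes.

```python
def build_csv_unload(query: str, s3_prefix: str, iam_role_arn: str) -> str:
    """Assemble an UNLOAD statement that writes CSV files with a header row.

    Assumption: `query` contains no single quotes, so it can be wrapped
    in single quotes as-is.
    """
    if "'" in query:
        raise ValueError("this sketch does not handle quotes inside the query")
    return (
        f"UNLOAD ('{query}')\n"
        f"TO '{s3_prefix}'\n"
        f"IAM_ROLE '{iam_role_arn}'\n"
        "FORMAT AS CSV\n"
        "HEADER;"
    )


# Hypothetical values, for illustration only.
csv_statement = build_csv_unload(
    query="select * from venue",
    s3_prefix="s3://example-bucket/exports/venue_",
    iam_role_arn="arn:aws:iam::123456789012:role/ExampleUnloadRole",
)
print(csv_statement)
```

The resulting string could then be run through whichever SQL client you already use against your cluster; swapping `FORMAT AS CSV` for `FORMAT AS JSON` would be the natural variation for tools that import JSON.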

The UNLOAD command uses the same parameters the COPY command uses for authorization. For more information, see Authorization parameters in the COPY command syntax reference.

The UNLOAD command needs authorization to write data to Amazon S3.

Required privileges and permissions

For the UNLOAD command to succeed, at least SELECT privilege on the data in the database is needed, along with permission to write to the Amazon S3 location. The permissions needed are similar to those for the COPY command. For information about COPY command permissions, see Permissions to access other AWS Resources.

If the query contains quotation marks, for example around a literal value, double them inside the single-quoted query string:

('select * from venue where venuestate=''NV''')

TO 's3://object-path/name-prefix'

The full path, including bucket name, to the location on Amazon S3 where Amazon Redshift writes the output file objects, including the manifest file if MANIFEST is specified. The object names are prefixed with name-prefix. If you use PARTITION BY, a forward slash (/) is automatically added to the end of the name-prefix value if needed. For added security, UNLOAD connects to Amazon S3 using an HTTPS connection.

By default, UNLOAD writes one or more files per slice. UNLOAD appends a slice number and part number to the specified name prefix as follows:

<object-path>/<name-prefix><slice-number>_part_<part-number>

If MANIFEST is specified, the manifest file is written as follows:

<object-path>/<name-prefix>manifest

UNLOAD automatically creates encrypted files using Amazon S3 server-side encryption (SSE), including the manifest file if MANIFEST is used. The COPY command automatically reads server-side encrypted files during the load operation. You can transparently download server-side encrypted files from your bucket using either the Amazon S3 console or API. For more information, see Protecting Data Using Server-Side Encryption.

To use Amazon S3 client-side encryption, specify the ENCRYPTED option.

REGION is required when the Amazon S3 bucket isn't in the same AWS Region as the Amazon Redshift database.
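The quoting rule and the object naming can be sketched in a few lines of Python. This is an illustration only: the helper names are hypothetical, the naming pattern is assumed to be `<object-path>/<name-prefix><slice-number>_part_<part-number>` with the manifest at `<object-path>/<name-prefix>manifest`, and the slice and part numbers are passed in as already-formatted strings because this sketch makes no claim about how Redshift pads them.

```python
def quote_unload_query(query: str) -> str:
    """Wrap a query for UNLOAD, doubling any single quotes inside it."""
    escaped = query.replace("'", "''")
    return f"('{escaped}')"


def data_object_name(object_path: str, name_prefix: str,
                     slice_number: str, part_number: str) -> str:
    """Build a data file name under the assumed naming pattern."""
    return f"{object_path}/{name_prefix}{slice_number}_part_{part_number}"


def manifest_object_name(object_path: str, name_prefix: str) -> str:
    """Build the manifest file name under the assumed naming pattern."""
    return f"{object_path}/{name_prefix}manifest"


# The query from the text, written with ordinary single quotes.
quoted = quote_unload_query("select * from venue where venuestate='NV'")
print(quoted)

# Hypothetical bucket, prefix, slice, and part values.
print(data_object_name("s3://example-bucket/unload", "venue_", "0000", "00"))
print(manifest_object_name("s3://example-bucket/unload", "venue_"))
```

Knowing the pattern up front helps when downstream tools need to list or filter the unloaded files by prefix.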
Unloads the result of a query to one or more text, JSON, or Apache Parquet files on Amazon S3, using Amazon S3 server-side encryption (SSE-S3). You can also specify server-side encryption with an AWS Key Management Service key (SSE-KMS) or client-side encryption with a customer managed key.

By default, the format of the unloaded file is pipe-delimited ( | ) text.

You can manage the size of files on Amazon S3, and by extension the number of files, by setting the MAXFILESIZE parameter. Ensure that the S3 IP ranges are added to your allow list.

You can unload the result of an Amazon Redshift query to your Amazon S3 data lake in Apache Parquet, an efficient open columnar storage format for analytics. Parquet format is up to 2x faster to unload and consumes up to 6x less storage in Amazon S3, compared with text formats. This enables you to save data transformation and enrichment you have done in Amazon S3 into your Amazon S3 data lake in an open format. You can then analyze your data with Redshift Spectrum and other AWS services such as Amazon Athena, Amazon EMR, and Amazon SageMaker.

For more information and example scenarios about using the UNLOAD command, see the Amazon Redshift documentation on unloading data.
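A Parquet unload with a file size cap might look like the sketch below. As before, the Python only assembles the statement text; `build_parquet_unload`, the bucket, the role ARN, and the `saledate` partition column are hypothetical, and the `FORMAT AS PARQUET`, `PARTITION BY`, and `MAXFILESIZE` clauses are taken from the Redshift UNLOAD syntax rather than from this article.

```python
from typing import Optional, Sequence


def build_parquet_unload(query: str, s3_prefix: str, iam_role_arn: str,
                         partition_by: Sequence[str] = (),
                         max_file_size_mb: Optional[int] = None) -> str:
    """Assemble an UNLOAD statement that writes Apache Parquet files.

    Assumption: `query` contains no single quotes.
    """
    lines = [
        f"UNLOAD ('{query}')",
        f"TO '{s3_prefix}'",
        f"IAM_ROLE '{iam_role_arn}'",
        "FORMAT AS PARQUET",
    ]
    if partition_by:
        # Partition columns become folder levels under the name prefix.
        lines.append(f"PARTITION BY ({', '.join(partition_by)})")
    if max_file_size_mb is not None:
        # Caps the size of each output file, which in turn drives file count.
        lines.append(f"MAXFILESIZE {max_file_size_mb} MB")
    return "\n".join(lines) + ";"


# Hypothetical values, for illustration only.
parquet_statement = build_parquet_unload(
    query="select * from sales",
    s3_prefix="s3://example-bucket/lake/sales/",
    iam_role_arn="arn:aws:iam::123456789012:role/ExampleUnloadRole",
    partition_by=["saledate"],
    max_file_size_mb=256,
)
print(parquet_statement)
```

Leaving `max_file_size_mb` unset omits the clause entirely, so Redshift's default file sizing would apply.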
